Gustaf Ahdritz

I'm a fourth-year PhD student in Computer Science at Harvard University. I'm a member of the Machine Learning Foundations Group and am advised by Boaz Barak. I'm supported by a fellowship from Harvard's Kempner Institute.

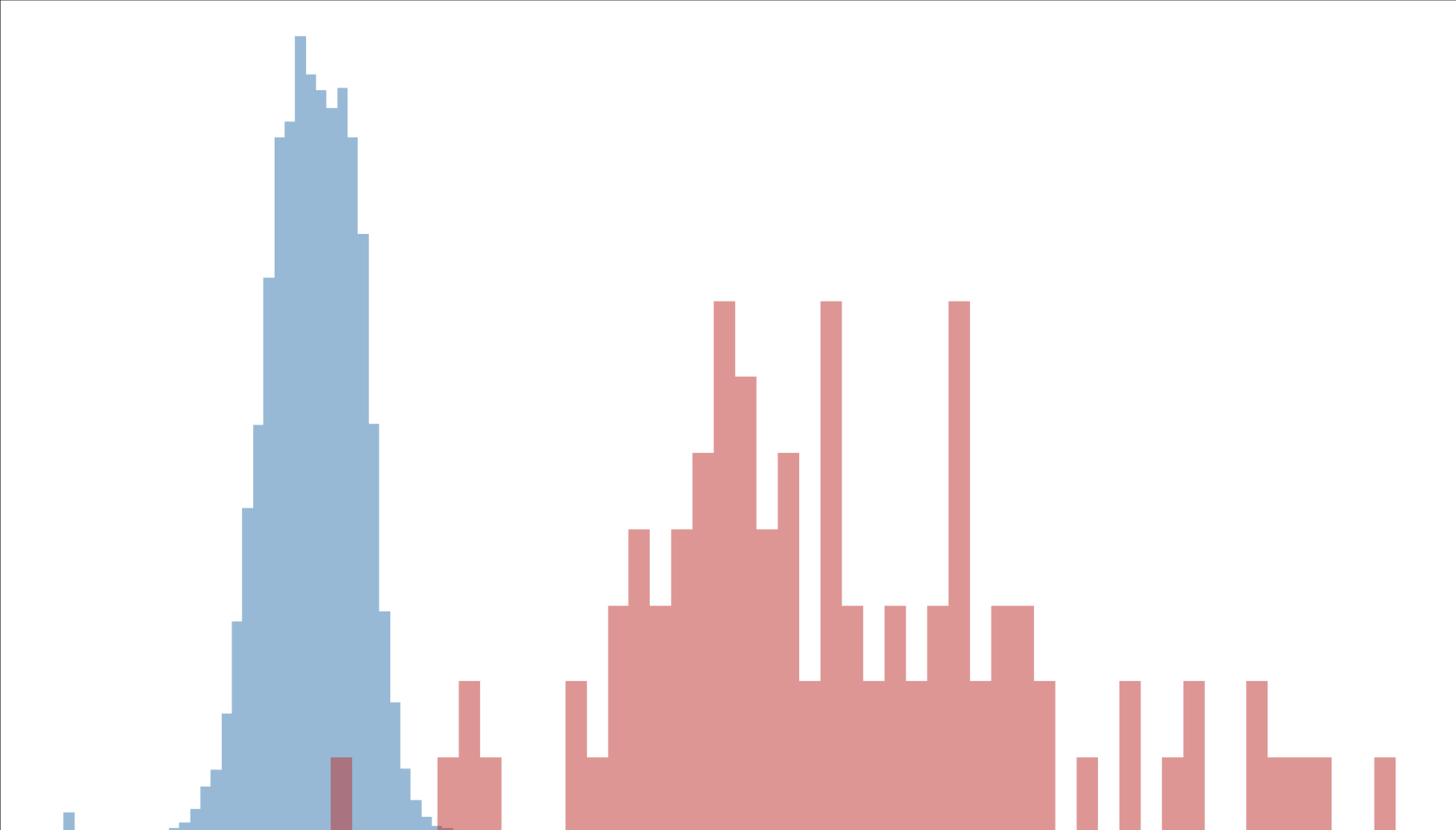

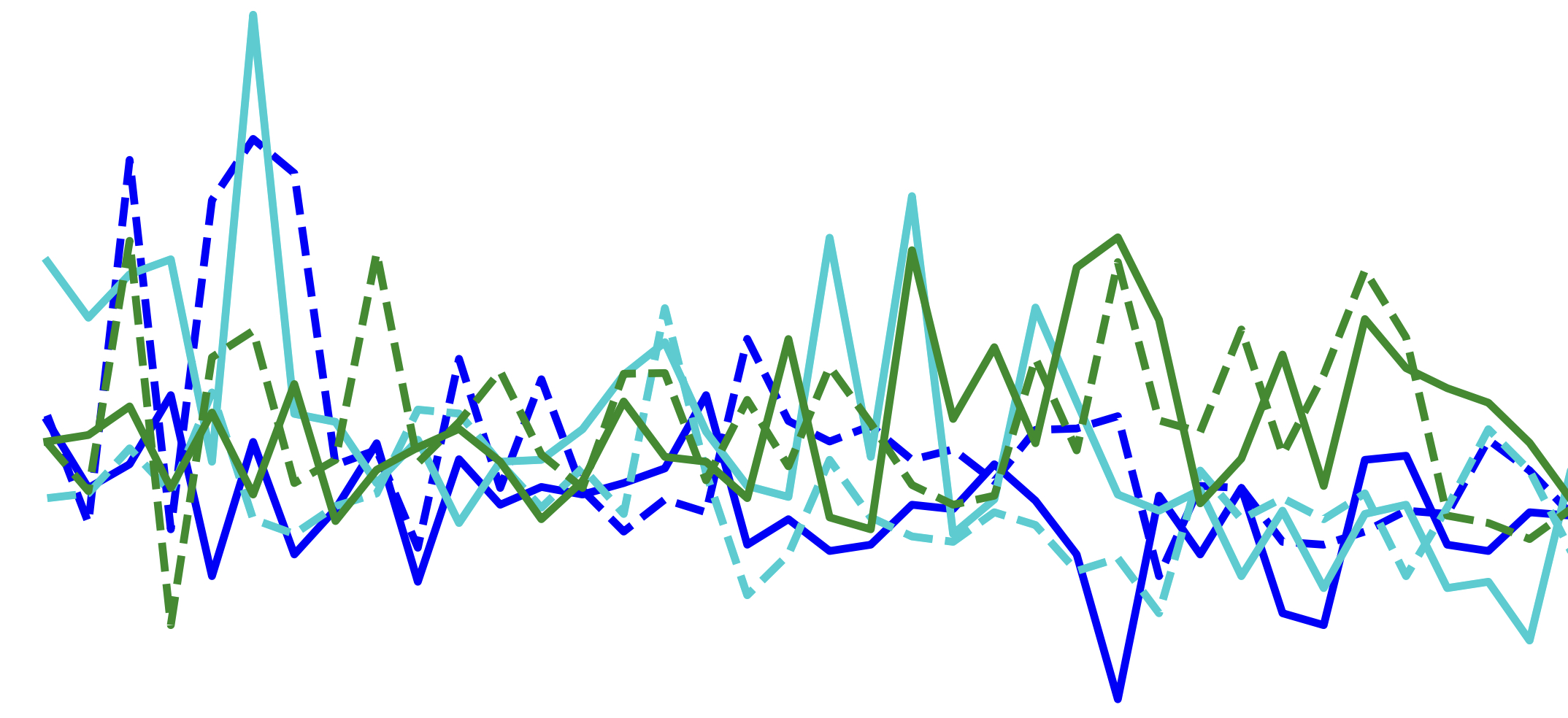

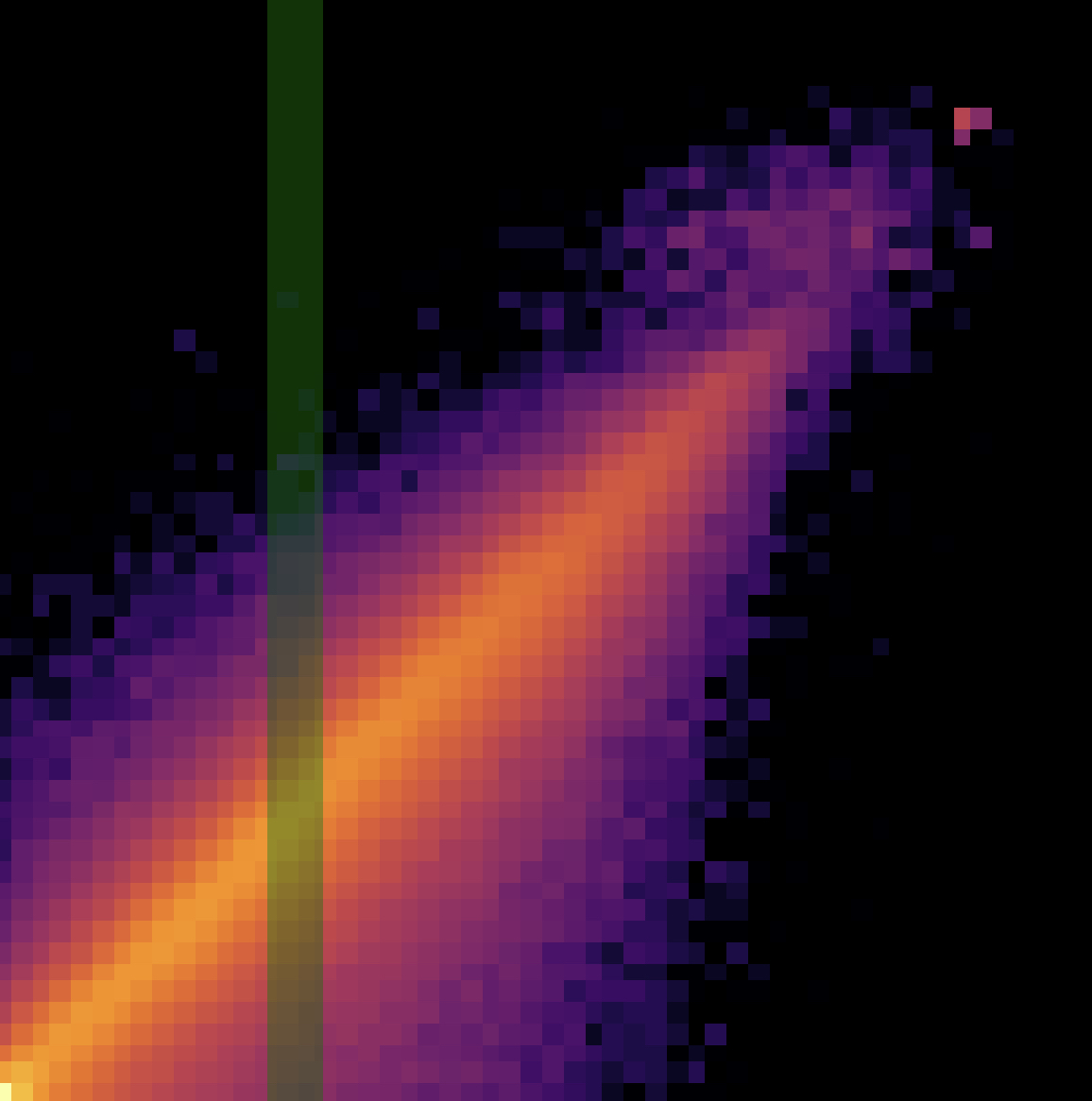

My primary research interest at the moment is uncertainty quantification, especially for large language models in realistic, unconstrained settings. Highlights include a new approach for automatically generating uncertainty labels in unconstrained text at scale, a rigorous framework for evaluating and improving uncertainty decompositions, and a general technique for enhancing uncertainty estimates for long-form LLM generations. Along the way, I've also worked on enabling real-time interactivity in LLMs and a benchmark measuring their ability to screen out untrustworthy information.

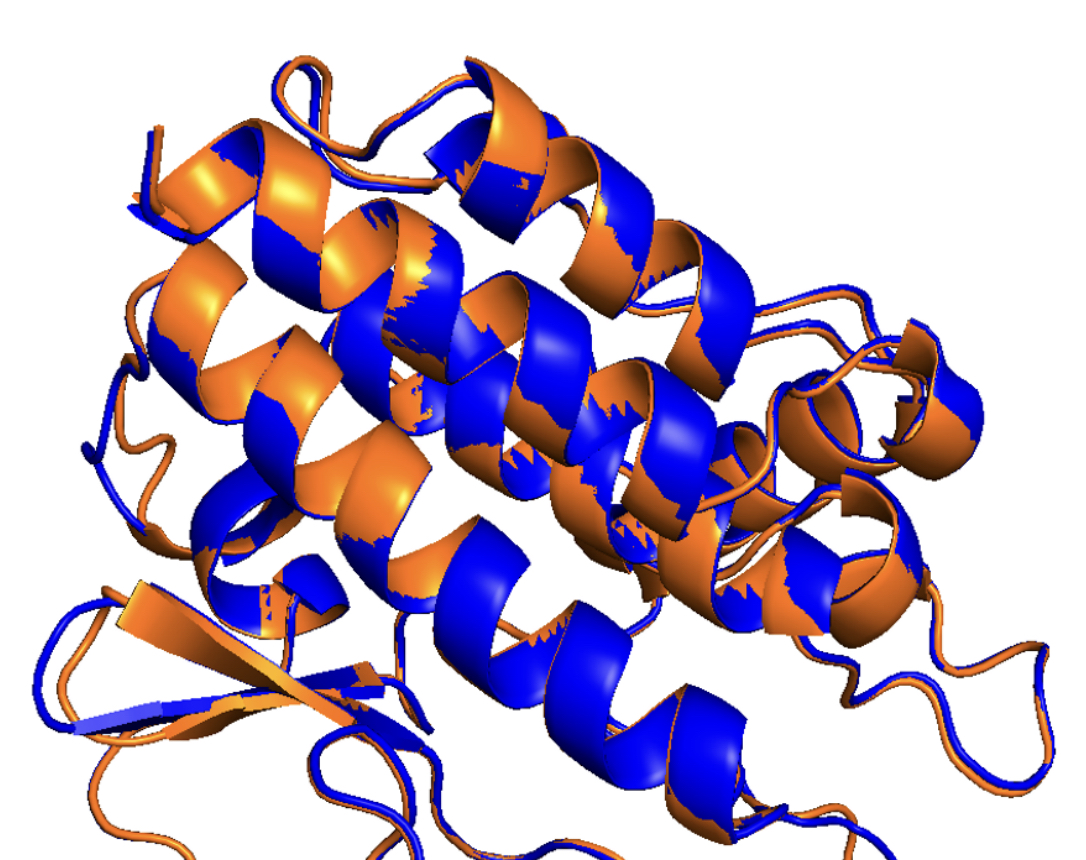

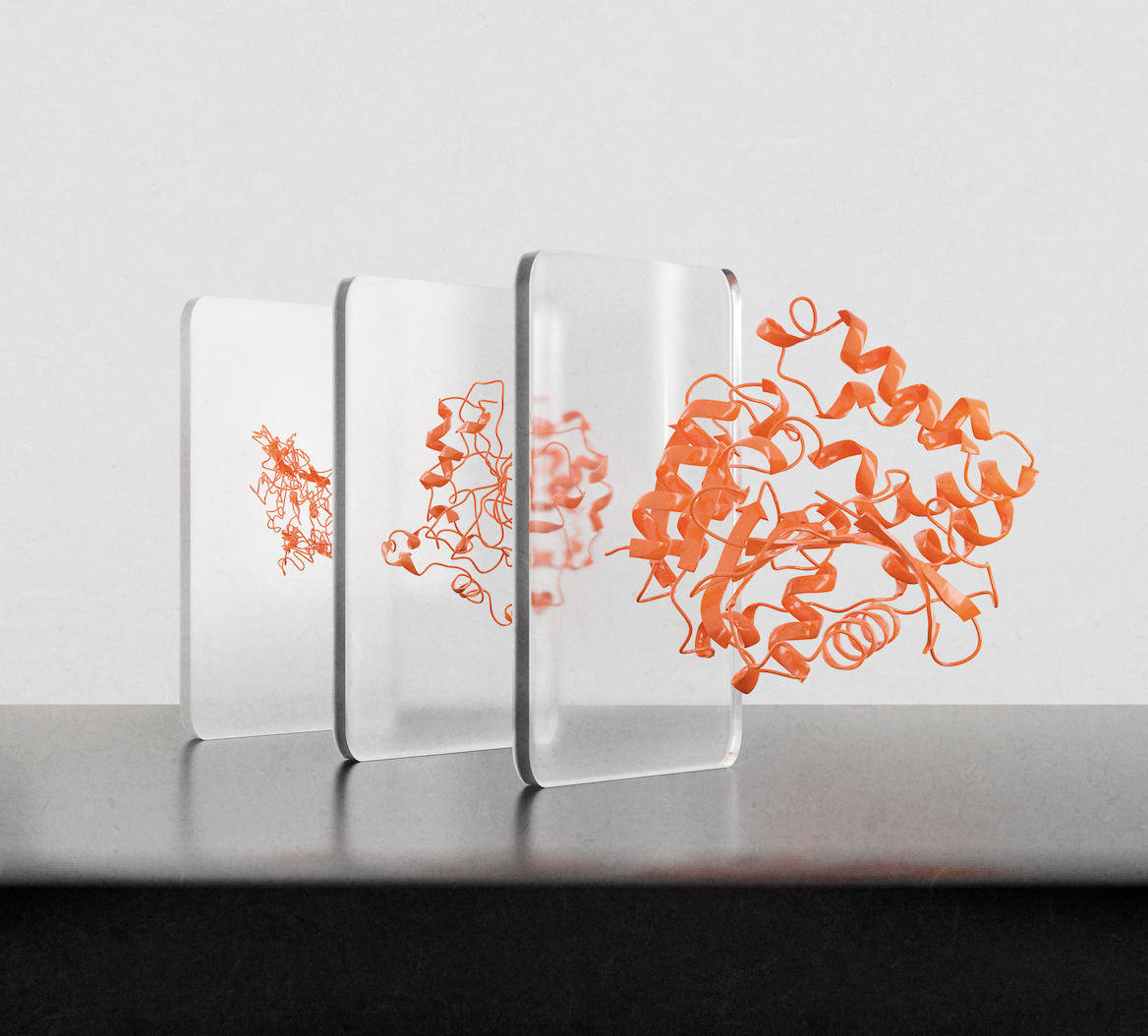

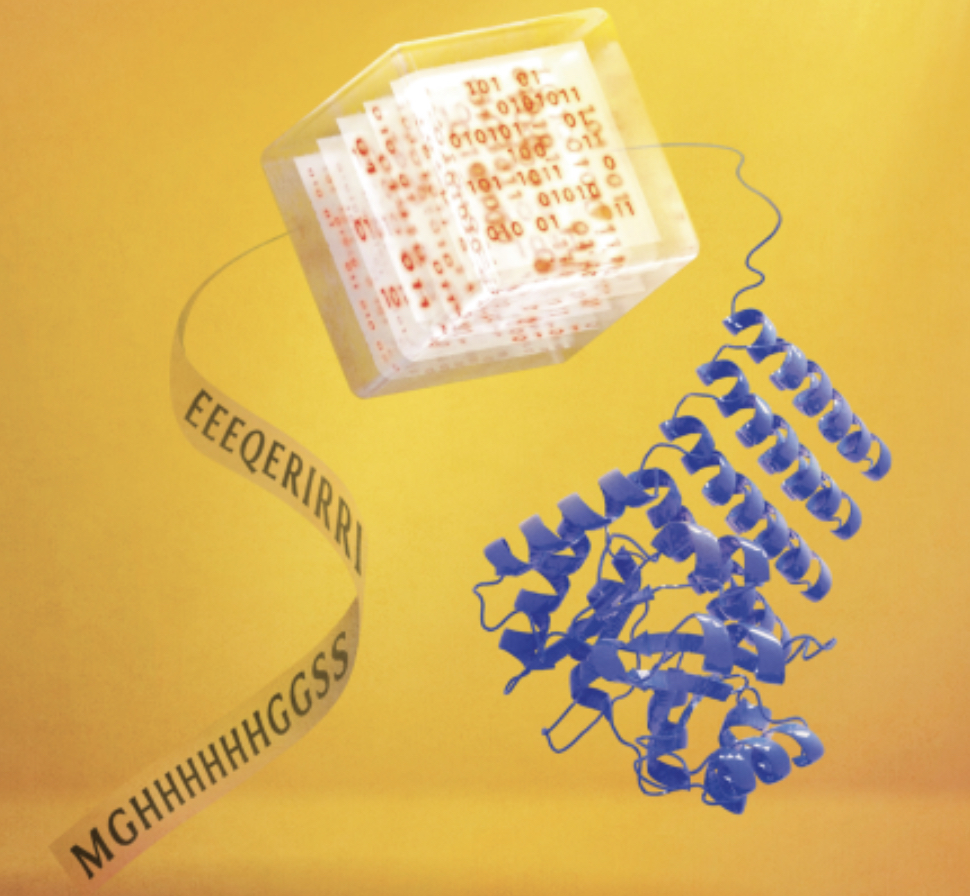

I interned at Meta in 2025 (working with Todor Mihaylov) and at Apple the previous year (Parikshit Gopalan, Udi Wieder, Moises Goldszmidt, and Anatoly Adamov (et al.)). Before that, I graduated from Columbia with a B.A. in Computer Science & History (2020) and an M.S. in Computer Science (2021). There, I worked with Mohammed AlQuraishi on the applied task of protein structure prediction and led the development of OpenFold.

Papers

Preprints

Conference Papers

Journal papers

Teaching

I've served as a teaching fellow/assistant for the following courses at Harvard and Columbia:

- Spring 2023: Foundations of Deep Learning (Harvard COMPSCI 229br) with Boaz Barak

- Spring 2019 - Spring 2021: Advanced Programming (Columbia COMS 3157) with Jae Woo Lee

Awards & Fellowships

- 2023 - 2028: Kempner Institute Graduate Fellowship (Kempner Institute, Harvard)

- 2022 - 2028: Ashford Fellowship (Harvard)

- 2020: Andrew P. Kosoresow Memorial Award for Excellence in Teaching and Service (Columbia SEAS)

- 2019: Dean Hawkes Memorial Prize (Columbia College)